2018

Emotion AI Project

We took on the challenge of enhancing today's computer interfaces through an understanding of human emotions. We believe that humans, unlike any current computer model, do not react in a binary fashion; a yes or a no may carry varying degrees of positive or negative connotation. VR provided the best controlled environment, which allowed us to understand these reactions and create a predictive model. Ultimately, these insights could be applied to external interfaces such as voice assistants, facial recognition, and gesture interfaces. VR is just the first step toward a much broader range of applications.

Project Pitch Materials

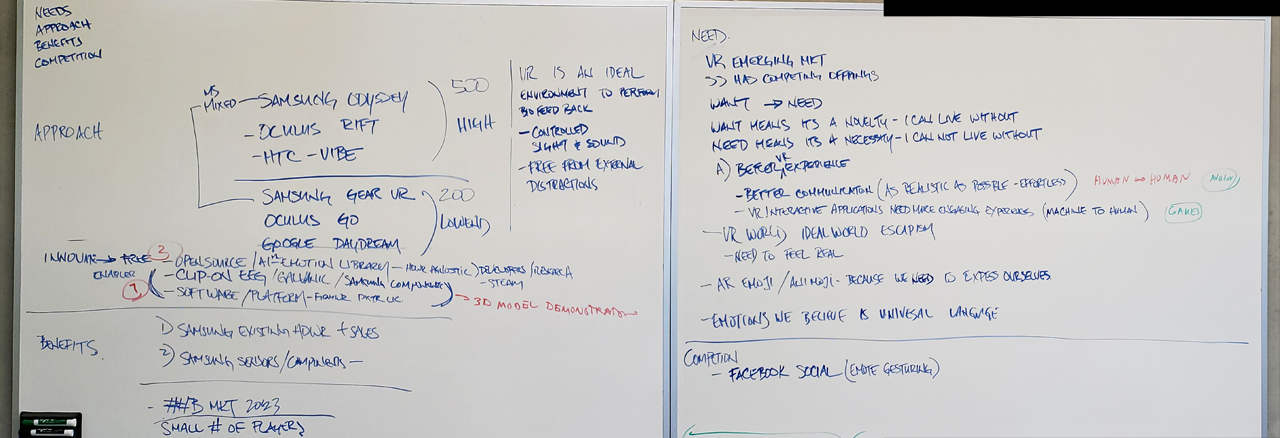

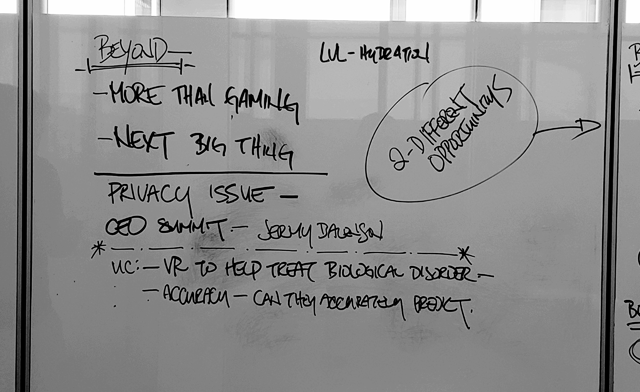

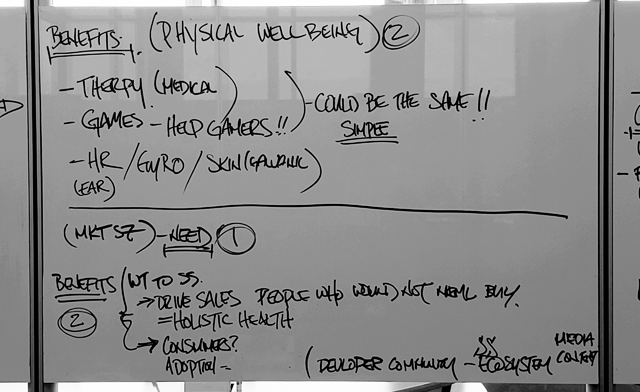

Modeling NABC

Mockups

Proof of Concept